Michael Fleischman is CTO and Co-founder of OpenSpace

In my last post, I talked about what it means to give AI agents eyes—to ground them in reality data so they can actually see what’s happening on a jobsite. Sometimes we describe this as bringing the field to the agent, and the issue review agent I described in that post is a good example: it can look at before-and-after images of an open issue and judge, on its own, whether the problem has been resolved.

But as powerful as it is to bring the field to agents, it’s even more powerful to bring agents to the field.

Because the most valuable moment on any construction project isn’t when someone sits down to review a report. It’s when someone is standing in the actual space, looking at the actual work in front of them.

That’s the next frontier for agents with eyes: agents in the field.

The challenge with bringing agents into the field

Taking an agent from the office to the field is more than a UI change. It requires fundamentally changing what the agent needs to know.

Agents working in the field need more than eyes (i.e., the ability to see what’s happening on the jobsite); they also need a sense of where they are. The questions that matter most on a jobsite, such as “What was supposed to be installed in this room?” or “What does this wall look like compared to last week?”, can only be answered if the agent knows where “here” is.

Outdoors, GPS solved this problem decades ago. But GPS doesn’t work reliably inside buildings.

GPS requires line-of-sight to satellites, and walls and ceilings block it. Hardware workarounds like Bluetooth beacons exist, but they’re expensive to deploy, hard to calibrate, and impractical on a construction site that’s changing every day. To get around this problem we had to find a new way to estimate location on a jobsite. We couldn’t find one out in the world, so we built our own.

AI Autolocation—GPS for the jobsite

We call it AI Autolocation, and it may be the biggest technical breakthrough we’ve had since we started OpenSpace. It requires no GPS and no external hardware (no beacons, no wireless access points). It’s built on our 360 capture technology, and all you need to use it is your smartphone.

Here’s how it works: every time a project teammate does a 360 capture with OpenSpace, the captured imagery is processed by our Spatial AI Engine and fused with sensor data from their smartphone to create what we call a sensor map: a rich, spatially indexed fingerprint of that location at that moment in time.

The next time a teammate enters that same space—even without a 360 camera, just the phone in their pocket—the system continuously compares the sensor data coming off their device to the sensor maps built from prior captures. It’s a matching problem, similar in spirit to how a music recognition app like Shazam can match a few seconds of audio to find what song it’s from. Except instead of identifying a song, we’re identifying where you are on the floor plan. In real time. With no external hardware.
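The post doesn’t describe the matching algorithm itself, so here is only a generic illustration of fingerprint matching, the same family of technique long used for Wi-Fi fingerprint localization: store fingerprints tagged with known positions, then estimate a new position from the stored samples closest to a live reading. `MapEntry`, `estimate_location`, the short numeric fingerprints, and the weighted k-nearest-neighbor rule are all hypothetical stand-ins, not OpenSpace’s Spatial AI Engine.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class MapEntry:
    """Hypothetical sensor-map sample: a floor-plan position plus the
    fingerprint (a fixed-length vector of sensor features) recorded there."""
    x: float
    y: float
    fingerprint: tuple[float, ...]


def estimate_location(sensor_map: list[MapEntry],
                      reading: tuple[float, ...],
                      k: int = 3) -> tuple[float, float]:
    """Generic weighted k-nearest-neighbor fingerprint matching (illustrative only)."""
    if not sensor_map:
        raise ValueError("sensor map is empty")
    # Rank every stored sample by Euclidean distance to the live reading.
    ranked = sorted(sensor_map, key=lambda e: math.dist(e.fingerprint, reading))
    nearest = ranked[:k]
    # Closer fingerprints get more weight; the epsilon avoids dividing by zero
    # when a stored fingerprint matches the reading exactly.
    weights = [1.0 / (math.dist(e.fingerprint, reading) + 1e-9) for e in nearest]
    total = sum(weights)
    x = sum(w * e.x for w, e in zip(weights, nearest)) / total
    y = sum(w * e.y for w, e in zip(weights, nearest)) / total
    return x, y
```

Averaging the top k matches rather than trusting the single best one smooths over noisy readings, at the cost of pulling the estimate toward the middle of the matched samples.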

The result: your location, tracked continuously, using nothing but the phone already in your pocket.

AI Autolocation learns to estimate your location without external hardware.

And crucially, this is a learning system. The more a site is captured, the better the location estimates get. The more teammates use it, the more the model learns how people actually move through construction environments, and the more accurately it can predict location even in the ambiguous, half-built spaces that define an active jobsite. We trained this model on our dataset of more than 65 billion square feet of construction data, a dataset unlike any other in the world, and it is what lets our agents estimate where they actually are on a jobsite.

This is what makes agents in the field genuinely useful. Without location, an agent on your phone is just an agent on a smaller screen. With it, the agent knows where it is, and that changes everything.

Example field agent: the PE buddy

We’re calling one of the first field agents we’ve built the “PE buddy”—a personal project engineer that lives in your pocket and walks the site with you. Here’s what it does:

- As a project teammate moves through the site, their location is tracked in real time and shown in our mobile app as a moving dot on the floor plan.

- Every photo they take is automatically pinned to the exact spot on the drawing where they were standing when they took it.

- No more guessing where a photo was taken. No more unlabeled images floating in a shared folder with no context. Every photo has a location.
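Pinning a photo to a spot on the drawing comes down to asking where the location track says the teammate was at the moment the photo was taken. A minimal sketch, assuming the tracker emits timestamped floor-plan coordinates (the hypothetical `Fix` records) and that linear interpolation between the two surrounding fixes is accurate enough; `pin_photo` is an illustrative name, not an OpenSpace API.

```python
from bisect import bisect_left
from dataclasses import dataclass


@dataclass(frozen=True)
class Fix:
    """Hypothetical location estimate: timestamp in seconds plus floor-plan x/y."""
    t: float
    x: float
    y: float


def pin_photo(track: list[Fix], photo_time: float) -> tuple[float, float]:
    """Return the floor-plan point where the user was at photo_time.

    Assumes `track` is sorted by time. Interpolates linearly between the two
    fixes that bracket the photo, and clamps to the first/last fix otherwise.
    """
    if not track:
        raise ValueError("no location fixes available")
    times = [f.t for f in track]
    i = bisect_left(times, photo_time)
    if i == 0:                 # photo taken at or before the first fix
        return track[0].x, track[0].y
    if i == len(track):        # photo taken after the last fix
        return track[-1].x, track[-1].y
    before, after = track[i - 1], track[i]
    # times[i-1] < photo_time <= times[i], so this denominator is never zero.
    frac = (photo_time - before.t) / (after.t - before.t)
    return (before.x + frac * (after.x - before.x),
            before.y + frac * (after.y - before.y))
```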

But the more interesting part is what happens when they talk.

The “PE buddy” field agent knows where you are on a jobsite and what you’re talking about.

The PE buddy transcribes everything the teammate says. But unlike a standard voice memo, each piece of that transcript is stamped with the time and the location where it was spoken. We call these “spatial transcripts” and they unlock a ton of value for workers in the field.
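One way to picture a spatial transcript is as ordinary speech-to-text segments joined against the location track by timestamp. This sketch assumes a hypothetical `(t, x, y)` track sorted by time and a recognizer that reports when each segment starts; it attaches the most recent location fix at or before that moment. `Segment`, `SpatialSegment`, and `stamp_transcript` are illustrative names, not OpenSpace’s actual format.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Segment:
    """Hypothetical speech-to-text output: start time in seconds plus text."""
    start: float
    text: str


@dataclass(frozen=True)
class SpatialSegment:
    """A transcript segment stamped with its time and floor-plan location."""
    start: float
    text: str
    location: Optional[tuple[float, float]]  # None if spoken before any fix


def stamp_transcript(segments: list[Segment],
                     track: list[tuple[float, float, float]]) -> list[SpatialSegment]:
    """Attach the latest (t, x, y) fix at or before each segment's start time.

    Assumes `track` is sorted by t.
    """
    times = [t for t, _, _ in track]
    stamped = []
    for seg in segments:
        # Index of the last fix whose timestamp is <= the segment start.
        i = bisect_right(times, seg.start) - 1
        location = (track[i][1], track[i][2]) if i >= 0 else None
        stamped.append(SpatialSegment(seg.start, seg.text, location))
    return stamped
```

Keeping segments with no location (rather than dropping them) means nothing the teammate said is lost, even if the tracker hadn’t produced a fix yet.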

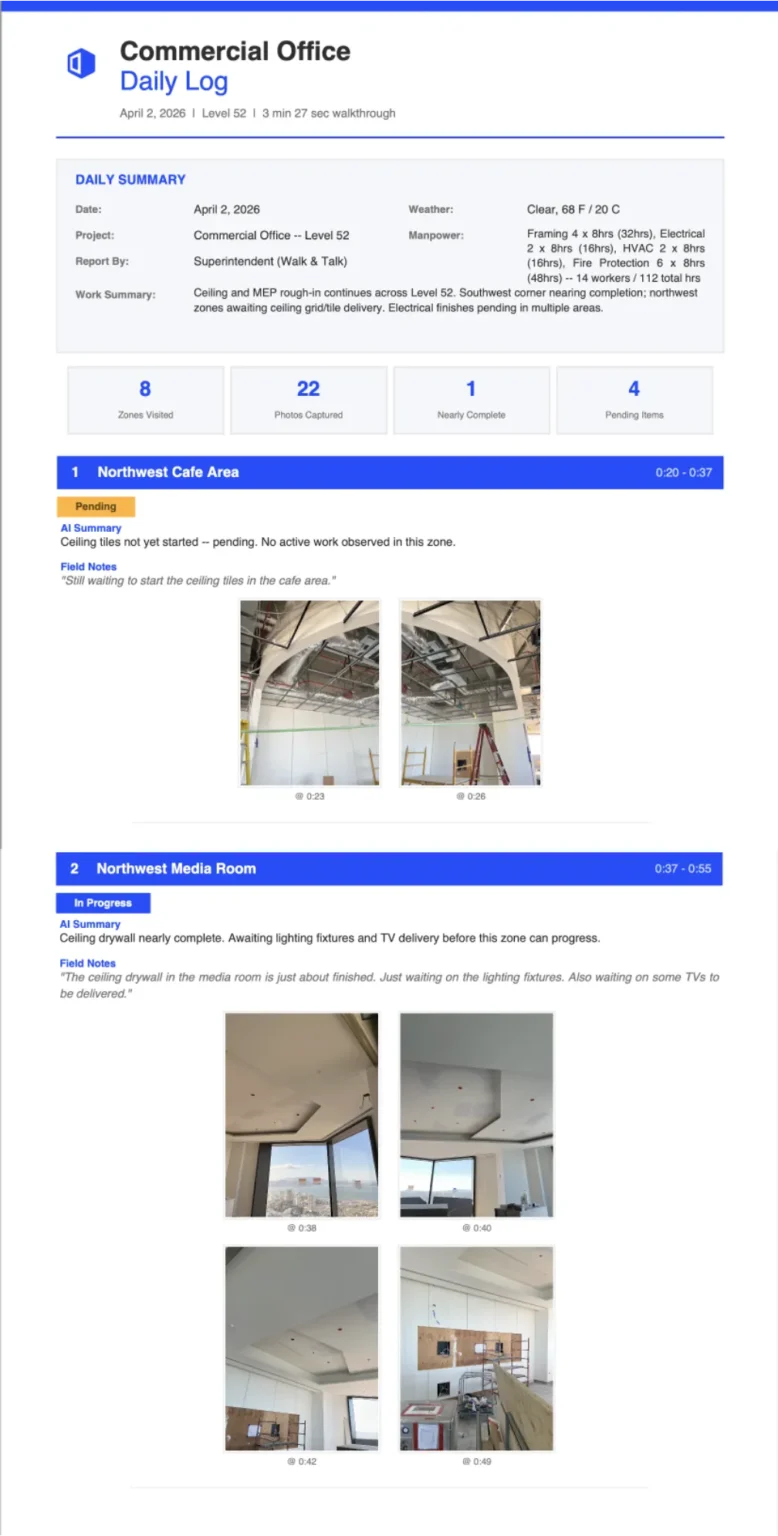

Think about what a superintendent does on a site walk: observing, narrating, and noting what’s done, what’s wrong, and what needs follow-up. With a spatial transcript, field agents can automatically organize those observations by location and use them to draft punch lists, progress notes, and new issues, or (my favorite use case) to generate daily logs formatted exactly the way the project requires, without anyone writing a single word.
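Organizing site-walk observations by location can start with testing which room each stamped observation falls in and grouping the notes. This sketch assumes hypothetical rectangular `Room` boundaries taken from the floor plan and `(x, y, text)` observations; real rooms are rarely rectangles, and the plain-text outline stands in for whatever daily log format a project actually requires.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Room:
    """Hypothetical axis-aligned room boundary on the floor plan."""
    name: str
    x0: float
    y0: float
    x1: float
    y1: float

    def contains(self, x: float, y: float) -> bool:
        return self.x0 <= x <= self.x1 and self.y0 <= y <= self.y1


def group_by_room(observations, rooms):
    """Group (x, y, text) observations by the first room containing the point."""
    grouped = defaultdict(list)
    for x, y, text in observations:
        room = next((r.name for r in rooms if r.contains(x, y)), "Unassigned")
        grouped[room].append(text)
    return dict(grouped)


def draft_log(grouped):
    """Render grouped notes as a simple per-room outline (illustrative format)."""
    lines = []
    for room in sorted(grouped):
        lines.append(f"{room}:")
        lines.extend(f"  - {note}" for note in grouped[room])
    return "\n".join(lines)
```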

It’s like having a personal assistant follow you around the jobsite.

What comes next

An agent that knows where you are on the jobsite, and is ready to help as soon as you pull it out of your pocket. That’s what agents in the field look like today.

But agents running on your smartphone are just the beginning.

There’s a kind of hardware that’s quietly been making its way onto construction sites, and workers are already wearing it. It makes everything I’ve described in this post feel even more natural and better suited to how people actually work in the field. And it isn’t some distant future: it’s already here, and adoption is accelerating fast.

And we’ll be talking more about that very soon… 😎 Stay tuned.

Learn more about agents with eyes in the agentic age

Here are links to articles from our AI agent series: