Michael Jones leads Product for OpenSpace

A single OpenSpace 360° walk can produce thousands of panos. A typical project produces millions. Buried in those images is the answer to almost every question a builder really cares about: Did the work get done? Did it get done right? Is the jobsite safe?

That visual record is the most valuable raw material in construction, and at scale, making sense of it and turning it into clear, trustworthy answers is the single biggest thing customers are asking us to do.

This post is about how we’re doing it.

What customers want from agents

The number-one thing we’ve heard from customers while building our agent sandbox is “please don’t give me another dashboard.” Instead, they say “make sense of my captures.” Tell me what’s on track. Tell me what’s slipping. Tell me which open issues are actually closed. Tell me without making me click through a thousand panos to confirm.

This is a deceptively hard ask. The imagery is noisy. Cameras get bumped. Capturers move fast and turn frequently. Rooms look alike. And people rarely ask, “what’s in this one photo?” They ask, “what’s true across all of them, right now, on this floor, this week?”

This is the problem we get up every morning to work on.

The flywheel that makes it possible

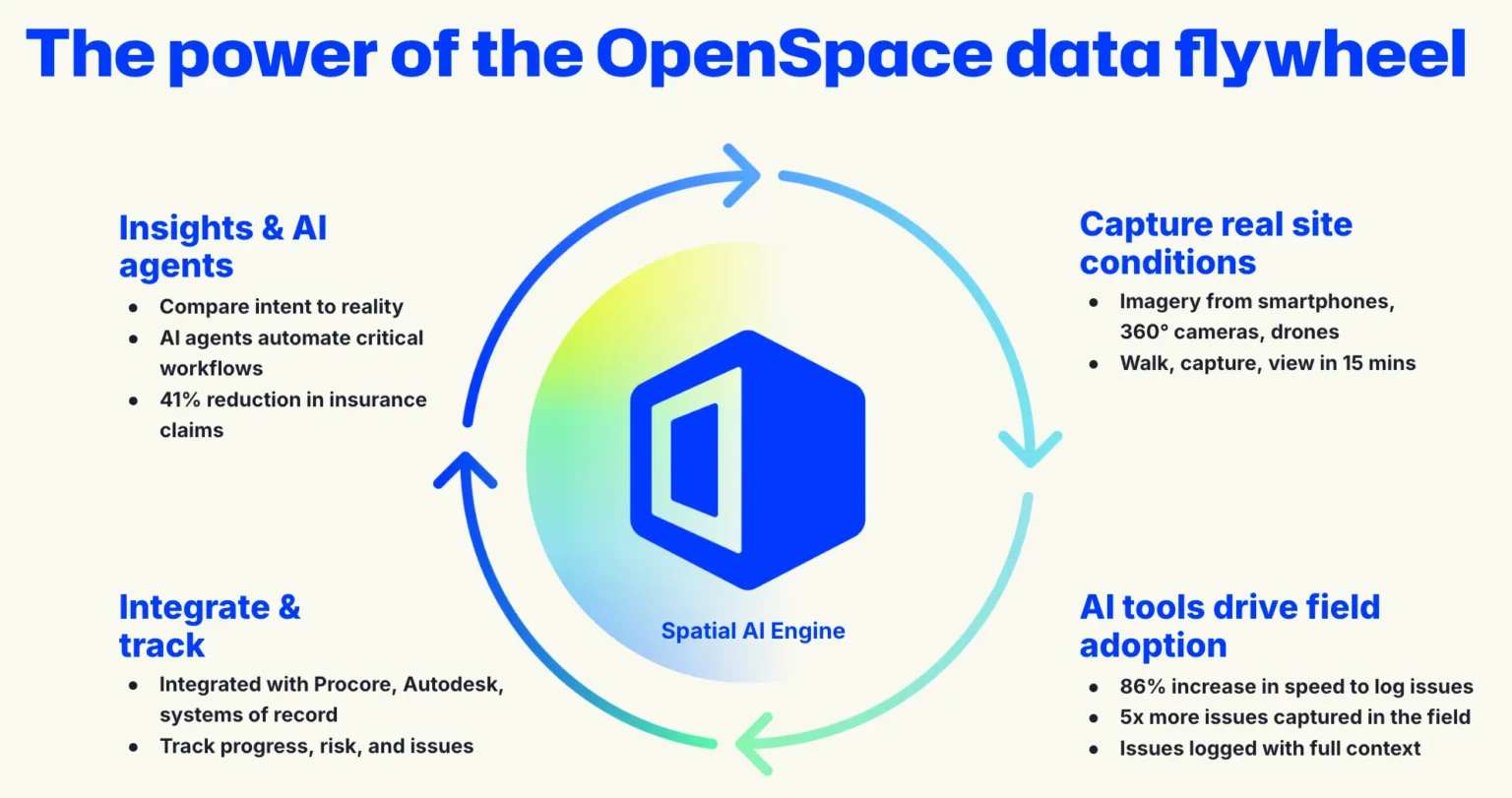

Solving the problem isn’t a single trick. It’s a flywheel, and we’ve been spinning it for the better part of a decade.

Builders capture real site conditions with smartphones, 360° cameras, and drones. Our AI capabilities drive field adoption by making the capture itself fast and useful: walk, capture, and view in about fifteen minutes; 86% faster issue logging; 5x more quality issues captured in the field and addressed faster. Integrations with systems like Procore and Autodesk deepen that adoption, so the data flows where work actually happens. And that integrated, spatially indexed data is what powers insights and AI agents that compare intent to reality and automate the work nobody enjoys doing manually.

Each turn of that wheel produces more of the one thing every agent in this industry needs: structured, verified, spatially indexed reality data. More than 65 billion square feet of it across 100,000 projects in 132 countries.

Why this is the right moment

The frontier AI labs are spending billions to make general-purpose vision models better, faster, and cheaper. We’re not trying to out-train them. We’re riding their wave.

There’s a moment in any new technology where the capability curve crosses a threshold and use cases go from “interesting demo” to “where has this been my whole life.” We think construction’s visual reasoning layer is approaching that moment now, and that 2026 is the year we cross it.

When that moment lands, the agents that make the difference are the ones grounded in the right data, sampled the right way, evaluated against the right benchmarks.

That is where our work goes. Quietly, and at a scale nobody else can match.

From images to intelligence to action

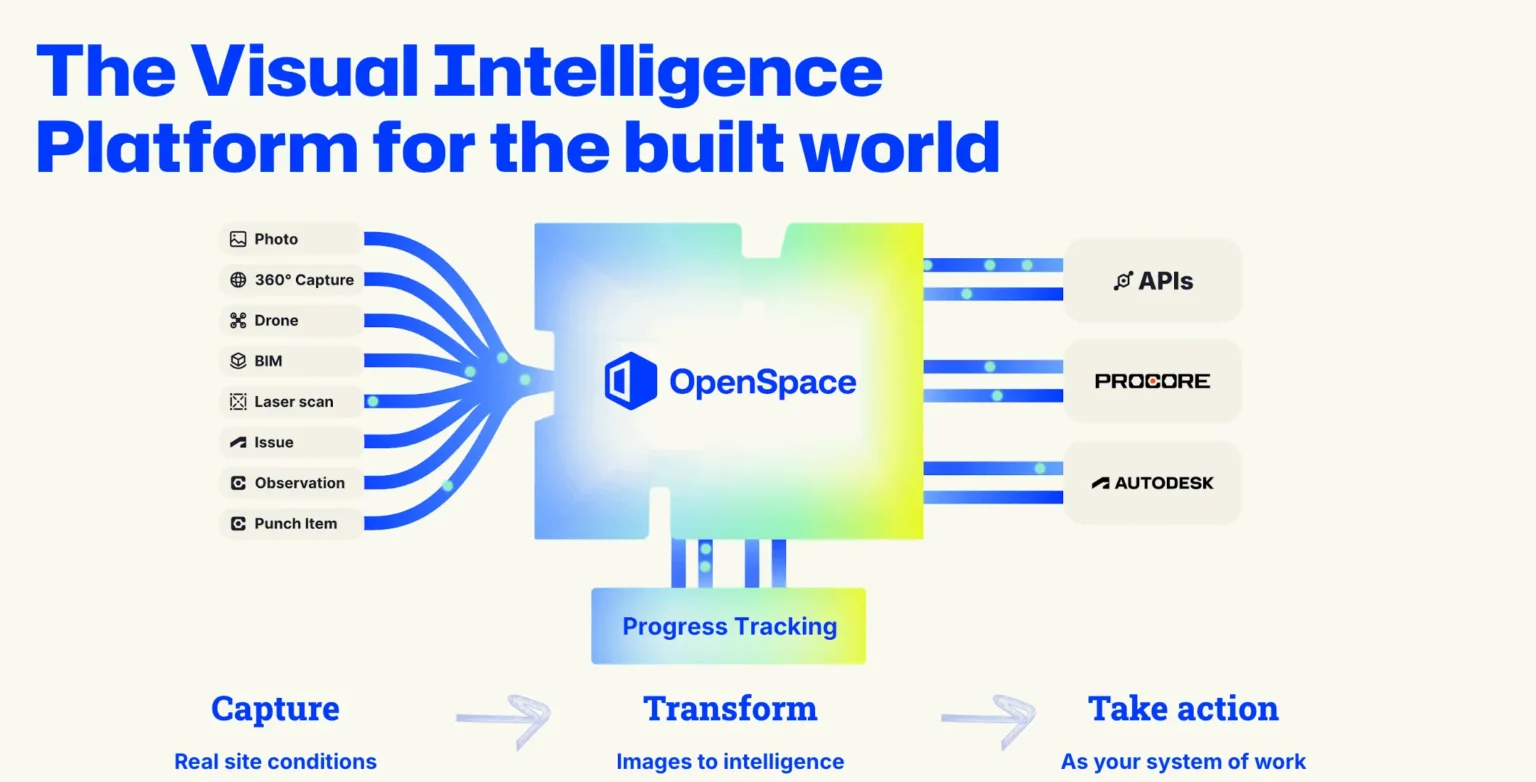

The other half of the answer is what happens after the agent reasons. An insight that doesn’t move work isn’t worth much on a jobsite.

We’ve spent the last year building the action layer: integrations with Procore and Autodesk, Field Notes, AI Autolocation, progress tracking, and the reporting that turns “the agent saw something” into “the team did something.” Capture. Transform. Take action. That’s our Visual Intelligence Platform, and it’s the reason an agent built on OpenSpace doesn’t stop at a sentence; it closes the loop.

For builders. For the industry.

For builders, this is how you’ll soon ask a real question about your job and get a real answer, grounded in what the camera saw, in seconds, in a form you can act on.

For partner platforms and investors, the durable position in this market isn’t the model and isn’t the dashboard. It’s the data flywheel underneath both. OpenSpace has the largest one in construction, and it’s still accelerating.

We don’t think this is going to be easy. We’ve been doing the hard part longer than anyone, and we’re ready for it.

Watch this space.